Meta Description:

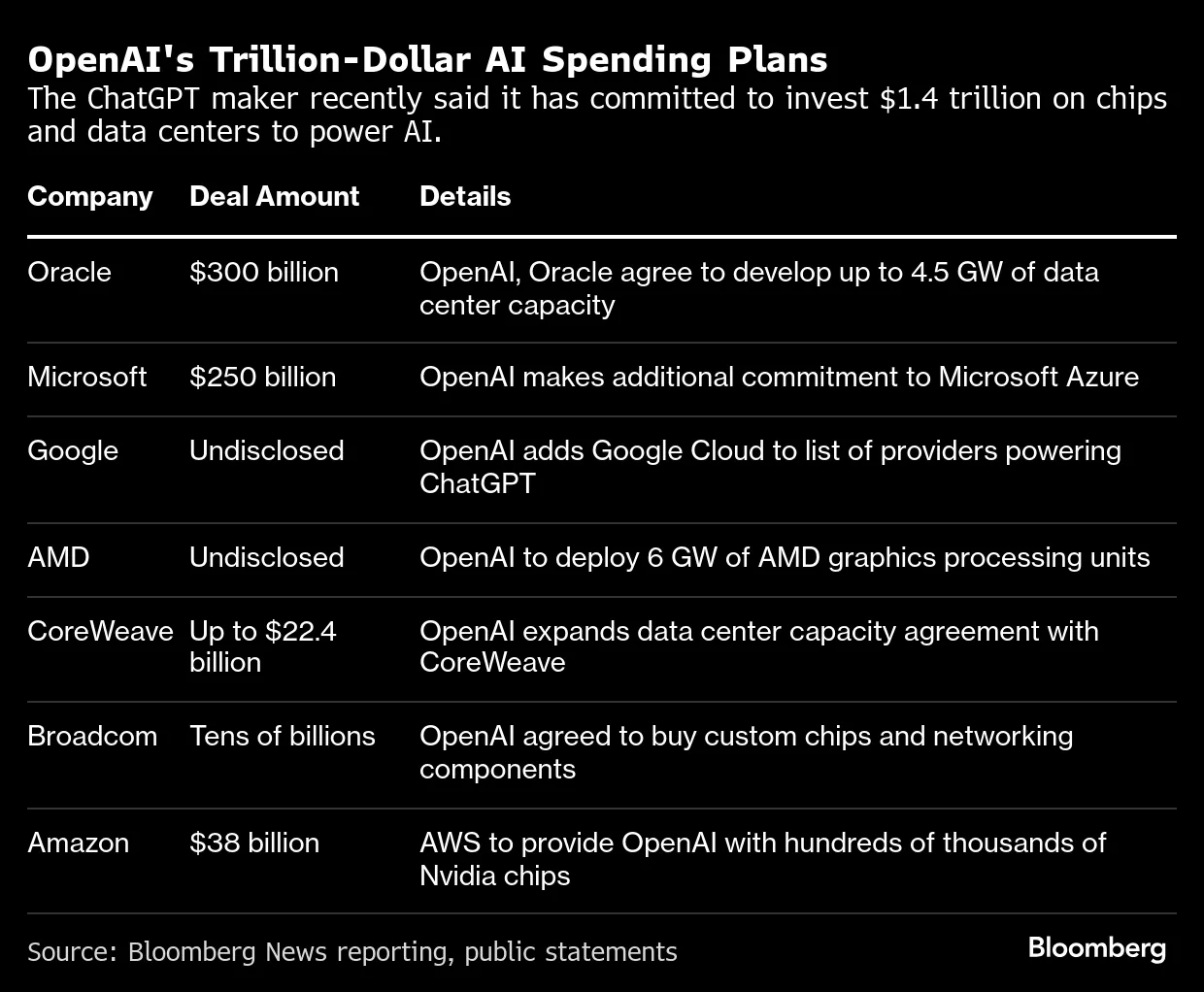

Amazon’s stock soars after sealing a $38 billion cloud partnership with OpenAI. Discover how Nvidia GPUs and AWS infrastructure could reshape the global AI market and redefine tech investment trends.

Amazon’s $38 Billion Partnership with OpenAI: A New Era for AI Infrastructure and Cloud Dominance

Introduction: The Day Wall Street Turned Its Eyes to AI Infrastructure

On November 3, 2025, Amazon.com Inc. (NASDAQ: AMZN) announced a $38 billion partnership with OpenAI, the creator of ChatGPT, in one of the most significant strategic alliances of the AI era so far. Under the seven-year deal, OpenAI will run its next generation of artificial intelligence models on Amazon Web Services (AWS), drawing on hundreds of thousands of Nvidia GPUs, the industry’s standard hardware for AI computation.

Within hours of the announcement, Amazon’s stock rose nearly 5% to a record high, lifting the company’s market capitalization past $2.6 trillion. Analysts quickly labeled the agreement a "watershed moment" for both the AI infrastructure market and the broader tech sector, one that puts AWS back at the center of an AI arms race in which Microsoft Azure and Google Cloud had been seen as the front-runners.

This partnership is about more than a cloud contract: it is about who controls the infrastructure on which future AI systems will be built. As OpenAI’s appetite for compute grows exponentially, the alliance signals a strategic realignment across the trillion-dollar AI ecosystem.

The Market Context: How the AI Infrastructure Race Is Redefining Tech Valuations

The rise of artificial intelligence (AI) has not only reshaped technology but also redrawn the boundaries of market competition. Since ChatGPT captured global attention in late 2022, demand for AI infrastructure (data centers, GPUs, and high-performance cloud services) has exploded.

AWS, Microsoft Azure, and Google Cloud have become the pillars of this AI revolution, each vying to become the default platform for model training and deployment. Yet despite more than a decade as the cloud market leader, Amazon has faced growing pressure from Microsoft, whose close relationship with OpenAI fueled Azure’s growth and boosted Microsoft’s stock performance in 2023–2024.

This latest Amazon–OpenAI partnership appears to rebalance the scales. By securing a multibillion-dollar contract built around Nvidia GB200 and GB300 chips, Amazon positions itself at the forefront of next-generation AI computing infrastructure, an arena expected to exceed $400 billion in annual spending by 2030, according to Goldman Sachs projections.

Investor Sentiment: Why Amazon Stock Is Reacting So Strongly

The stock market’s reaction was immediate and emphatic. Within a single trading session, Amazon shares jumped 4.8%, adding roughly $120 billion to its market cap. Institutional investors read the move as validation of AWS’s ability to handle massive-scale AI workloads.

This surge was not fueled by hype alone. Analysts at Morgan Stanley, JPMorgan, and Wedbush have noted that the OpenAI partnership gives Amazon a contracted, long-term revenue stream that could add $5–6 billion a year to AWS’s top line as capacity ramps up through 2026.
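The $5–6 billion figure is roughly what the headline numbers imply if the contract value is spread evenly over its term, and the stock-move figures can be cross-checked the same way. The sketch below is a back-of-the-envelope check only: it assumes straight-line spending across seven years (the actual payment schedule and pricing have not been disclosed) and uses only the $38 billion, 4.8%, and $120 billion figures reported here. The function names are hypothetical.

```python
# Back-of-the-envelope checks on the deal and stock-move figures.
# Assumption: contract value is spread evenly over the term; the real
# payment schedule has not been disclosed.

def average_annual_value(total_usd: float, years: float) -> float:
    """Average yearly contract value under a straight-line assumption."""
    return total_usd / years


def implied_prior_market_cap(gain_usd: float, pct_gain: float) -> float:
    """Market cap before a move, given its dollar gain and percentage gain."""
    return gain_usd / pct_gain


annual = average_annual_value(38e9, 7)               # about $5.43 billion a year
prior_cap = implied_prior_market_cap(120e9, 0.048)   # about $2.5 trillion
post_cap = prior_cap + 120e9                         # about $2.62 trillion

print(f"Average annual contract value: ${annual / 1e9:.2f}B")
print(f"Implied market cap before the move: ${prior_cap / 1e12:.2f}T")
print(f"Implied market cap after the move: ${post_cap / 1e12:.2f}T")
```

An even spread lands at about $5.4 billion a year, inside the analysts’ range; in practice, revenue would more plausibly start lower and rise as capacity comes online.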

For investors, this reinforces a familiar narrative: while AI software companies attract the attention, infrastructure providers, the firms that supply the compute backbone for AI innovation, may prove the more durable winners. Nvidia, AWS, and Microsoft Azure sit at the heart of this dynamic, and the partnership strengthens Amazon’s claim to a place in that group.

The Bigger Picture: How Nvidia Chips Became the New Oil of the AI Economy

At the center of this alliance stands Nvidia Corporation (NASDAQ: NVDA), the supplier of the GPUs that power most major AI systems today. These chips, particularly the Blackwell-generation GB200 and GB300 specified in this deal, are designed for enormous training and inference workloads, the kind required by frontier models such as GPT-5 and its successors.

As part of the deal, AWS will deploy hundreds of thousands of Nvidia GPUs across its data centers, creating one of the world’s largest GPU clusters dedicated to artificial intelligence. The commitment further consolidates Nvidia’s dominant position in AI computation after a run of record-breaking gains in its stock.

In effect, the partnership links three companies, although only Amazon and OpenAI are parties to the contract:

- Amazon, providing scalable cloud infrastructure.
- OpenAI, pushing the boundaries of generative AI.
- Nvidia, supplying the computational power to make it all possible.

This combination could shorten AI research and deployment cycles, compressing training runs that once took months of compute time into weeks or even days.
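The “months into weeks” claim follows from simple arithmetic: under ideal linear scaling, wall-clock training time equals total training compute divided by the cluster’s sustained throughput. The sketch below encodes that relationship. Every input is a hypothetical round figure chosen for illustration, not a published spec for any Nvidia chip or OpenAI model, and real clusters scale less than linearly because of communication and failure overheads.

```python
SECONDS_PER_DAY = 86_400


def training_days(total_flops: float, num_gpus: int,
                  peak_flops_per_gpu: float, utilization: float) -> float:
    """Ideal wall-clock training time in days.

    time = total compute / (GPU count * per-GPU peak FLOP/s * utilization).
    Assumes perfect linear scaling across GPUs, which real clusters do not achieve.
    """
    sustained_flops = num_gpus * peak_flops_per_gpu * utilization
    return total_flops / sustained_flops / SECONDS_PER_DAY


# Hypothetical inputs for illustration only.
RUN_FLOPS = 1e25       # total compute for a hypothetical training run
PEAK_PER_GPU = 1e15    # hypothetical 1 PFLOP/s peak per GPU
UTILIZATION = 0.4      # hypothetical fraction of peak actually sustained

for gpus in (10_000, 100_000):
    days = training_days(RUN_FLOPS, gpus, PEAK_PER_GPU, UTILIZATION)
    print(f"{gpus:>7,} GPUs -> {days:.1f} days")
```

Under these assumptions, a tenfold larger cluster turns a run of about a month into roughly three days; real-world gains would be smaller.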


Deal Details: OpenAI + Amazon Web Services (AWS) + Nvidia Corporation

1. What the Deal Entails

- The partnership between OpenAI and Amazon Web Services is worth approximately US$38 billion over a term of about seven years. (Reuters)
- Under the agreement, AWS will give OpenAI access to hundreds of thousands of Nvidia GPUs, not isolated servers but massive clustered infrastructure suited to large-scale AI model training and inference. (mint)
- OpenAI can begin using AWS compute infrastructure immediately, with the full target capacity slated for deployment by the end of 2026 and options for further expansion beyond 2027. (The Peninsula Newspaper)
- The GPUs specified are next-generation Nvidia accelerators, specifically the GB200 and GB300 AI chips (Blackwell architecture), which AWS plans to cluster for OpenAI’s use. (mint)
- AWS will also deploy custom infrastructure, such as EC2 UltraServers and specialized interconnects that link GPUs into large clusters optimized for AI workloads. (headlineadda.com)

2. Strategic Context for Each Company

- For OpenAI: The deal is a major step in scaling its AI infrastructure. To build far more powerful AI models and deploy them at global scale, OpenAI needs massive, reliable compute power. This contract diversifies its cloud footprint after heavy reliance on Microsoft and anchors a large compute commitment. (Reuters)
- For Amazon (AWS): Securing a high-profile, multibillion-dollar deal cements its position in the “AI infrastructure arms race”. AWS had faced increasing competition from Azure and Google Cloud for large AI compute workloads; this deal marks a significant win. (mint)
- For Nvidia: Although not a contracting party, Nvidia is a central beneficiary. The agreement hinges on Nvidia GPUs, reinforcing its dominance in AI hardware, and large-scale commitments like this one bolster demand for its advanced chips. (theregister.com)

3. Economic & Financial Analysis

- Revenue Implications: For AWS, the deal potentially translates into a predictable, long-term revenue stream over the term of the agreement. While many details (such as pricing, margins, and cost structure) are not public, the magnitude is large enough to influence investor sentiment.
- Cost & Investment: On OpenAI’s side, the deal locks in a massive spending commitment. Running hundreds of thousands of GPUs also entails electricity, cooling, data centre real estate, and network infrastructure costs that AWS must recover through its pricing, so the economics are non-trivial for both parties. Analysts have raised concerns about sustainability if revenue growth doesn’t keep pace. (spokesman.com)
- Competitive Margins & Cloud-War Dynamics: By locking in a large customer (OpenAI), AWS may gain leverage in the cloud infrastructure market. The increased scale could yield operational efficiencies, but it also exposes AWS to risk if AI workloads don’t translate into profitable business models as expected.
- Chip Supply Dynamics: Because Nvidia supplies the hardware and demand is rising sharply, supply constraints or Nvidia’s pricing power could squeeze margins for AWS or OpenAI. The deal amplifies the value of access to next-generation chips and interconnects, advantages that may create competitive moats.
- Stock Market Reaction & Market Signalling: As noted, the announcement lifted Amazon’s stock price, reflecting investor optimism about AWS’s enhanced position. The perception that Amazon has secured a “backbone” deal for AI infrastructure helped drive positive sentiment. (AP News)
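To give a sense of scale for the operating costs listed above, the sketch below estimates the annual electricity bill for a large GPU fleet. The fleet size, all-in power per GPU (including cooling and networking overhead), and electricity price are hypothetical placeholders, not disclosed terms of the AWS–OpenAI deal; whether AWS absorbs the cost as operator or passes it on through pricing, the arithmetic is the same.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_cost(num_gpus: int, kw_per_gpu: float,
                       usd_per_kwh: float) -> tuple[float, float]:
    """Return (fleet power draw in MW, yearly electricity cost in USD).

    Assumes the fleet runs at the given average draw around the clock.
    """
    fleet_kw = num_gpus * kw_per_gpu
    cost_usd = fleet_kw * HOURS_PER_YEAR * usd_per_kwh
    return fleet_kw / 1_000, cost_usd


# Hypothetical inputs for illustration only.
power_mw, cost_usd = annual_energy_cost(num_gpus=200_000, kw_per_gpu=1.5,
                                        usd_per_kwh=0.08)
print(f"Average draw: {power_mw:,.0f} MW, yearly electricity: ${cost_usd / 1e6:,.0f}M")
```

With these illustrative inputs, electricity alone comes to roughly $210 million a year, before hardware, real estate, and staffing.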

4. Timeline & Key Milestones

- Immediately: OpenAI begins using AWS infrastructure under the deal. (Anadolu Ajansı)
- By end of 2026: Full target capacity (hundreds of thousands of GPUs) deployed in AWS data centres. (The Peninsula Newspaper)
- Beyond 2027: Potential expansion phase, with optional growth clauses in the contract allowing additional capacity. (theregister.com)

5. Risks & Considerations

- Concentration risk: If OpenAI becomes heavily reliant on one cloud provider (AWS) for mission-critical workloads, it could cede negotiating leverage or face reliability risk.
- Infrastructure spend vs. monetisation: Huge infrastructure commitments only make sense if OpenAI’s AI products generate enough revenue growth and profitability to justify the compute spend. Some analysts warn of an “AI infrastructure bubble”. (spokesman.com)
- Competitive responses: Rival cloud providers (Azure, Google Cloud) may respond aggressively, potentially leading to price wars, margin pressure, or differentiated service offerings.
- Chip supply & cost risk: If Nvidia (or other hardware vendors) can’t meet demand, or if pricing escalates, costs for AWS and OpenAI could rise beyond expectations.
- Regulatory scrutiny: Large-scale cloud deals and one provider’s dominance in AI infrastructure might attract antitrust or national security oversight, depending on jurisdiction.

Summary & Takeaway

The $38 billion, seven-year contract between OpenAI and AWS, anchored around Nvidia GPU delivery, is a landmark in the AI infrastructure domain. It signals a shift in the competitive landscape of cloud computing, repositions AWS as a core player in high-end AI workloads, and underscores the growing centrality of compute hardware (Nvidia chips) in the AI ecosystem.

From an economic standpoint, the deal offers AWS a major revenue opportunity and strategic advantages. For OpenAI, it provides the raw compute scale needed for its next-generation AI ambitions, but it also commits the company to a heavy infrastructure burden and the risks that come with it.

Investors and market watchers will focus not just on the headline value, but on how quickly the deal translates into operational deployment, revenue growth, margin improvement, and whether this form of compute-heavy investment remains sustainable in the long term.
